When execution becomes cheap, the assumptions behind it become expensive. The current conversations in AI are about capabilities: what agents can do, how fast they can code, how many tasks they can handle. The real problem is whether the context they execute within is true.

Get the context right and an agent produces correct, domain-specific output at scale. Get it wrong and you get something worse than a bad answer: a confident bad answer. Hallucination is primarily a context problem, and solving it requires models optimized for different rewards than the ones leading the benchmarks today.

Truth Has Always Been Designed

Truth, in practice, has never arrived clean. Events happen, but the mechanism that turns events into something people act upon is always shaped by the subjectivity of those who perceive them, and the incentives of the authors and authorities who share them. By the time a truth reaches you, it has been selected, compressed, and framed.

Chomsky spent decades arguing, most prominently with Edward Herman in Manufacturing Consent, that mass media doesn't simply report reality; it manufactures consent by selecting which facts matter, which framings are acceptable, which questions are legitimate. Editors choose, sources are filtered, repetition creates consensus. The facts may be accurate, but the selection is inherently editorial.

Harari argues that what separates humans from every other species isn't intelligence but the ability to coordinate through shared fictions: money, nations, corporations, legal systems, religions. None are grounded in objective reality. They survive because collective agreement reinforces them, institutions encode them, and over time the agreement hardens into something that feels permanent. That's not a weakness. It's the mechanism that enables millions of strangers to cooperate. Truth, at civilizational scale, is a coordination protocol.

These coordination protocols are the primitives of our society. And they're everywhere. History books are curated timelines shaped by the objectives of their authors and authorities. Legal codes are codified opinions that a society agreed to treat as rules. Scientific consensus is the best available approximation, explicitly designed to be overwritten when better evidence arrives. Even mathematics, the most rigorous of the formal disciplines, operates within axioms that are chosen, not proven. Gödel showed that this is a permanent structural feature: no consistent formal system powerful enough to express arithmetic can prove its own consistency.

None of this makes truth meaningless. These primitives abstract away the subjectivity of truth, and enable societies to scale across time, knowledge, and actions. Without shared fictions, you can't have cities. Without legal codes, you can't have commerce. Without scientific consensus, you can't have medicine. Each layer compresses the truth beneath it into something usable. That compression is what makes coordination possible at the next level of scale. Truth is a design problem. It always has been. Until now, humans carried the design implicitly.

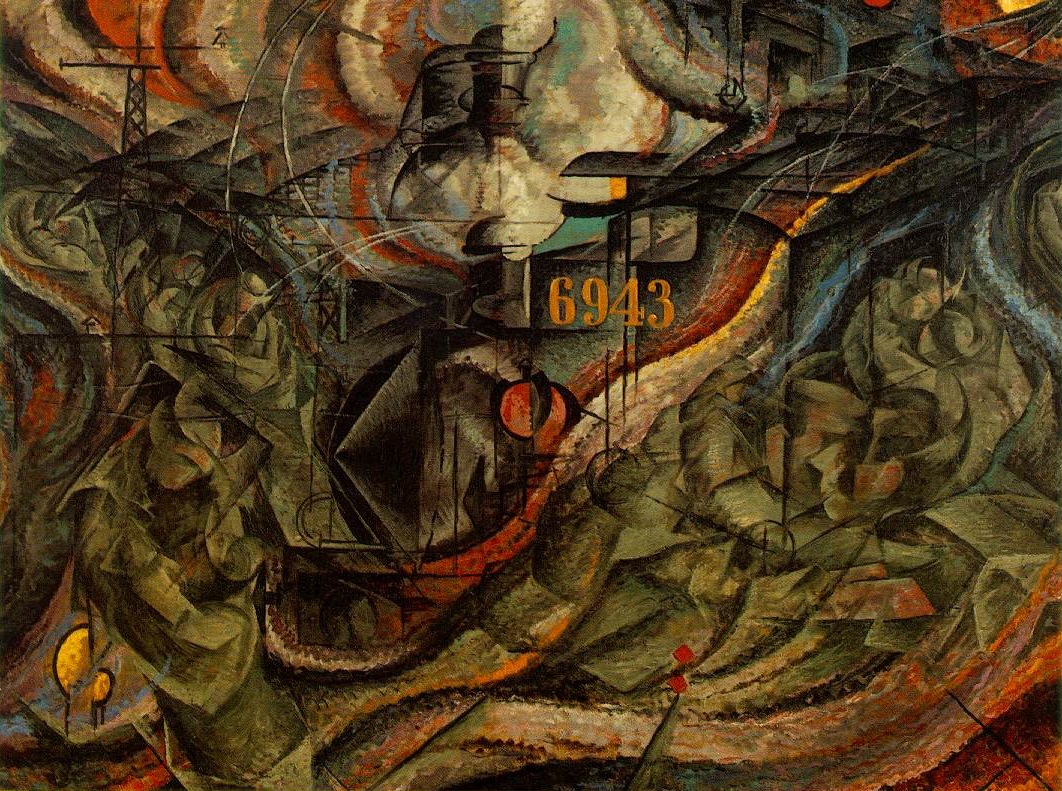

Umberto Boccioni, States of Mind II: Those Who Go (1911).

The Same Pattern, Three Times

This structure appears wherever complex systems need to grow beyond the capacity of a single actor. Designing these abstractions well defines the upper limit of a system, whether that system is software, a society, or a startup.

In computer science, this phenomenon is explicit and well-studied. Machine code is incomprehensible at scale. Assembly languages compress it into something a human can read. High-level languages compress further. Frameworks, libraries, and APIs compress again. Each layer hides the complexity beneath it and exposes a clean interface to the layer above. No single programmer could build a modern application from transistors. The abstraction stack makes it possible, and primitives are the current frontier of that progression.

In business, the same pattern operates through culture. Ben Horowitz describes culture as "the set of assumptions your employees use to resolve ambiguity." Culture is a compression layer: it takes the full complexity of "how should we operate" and distills it into shared axioms that every employee can apply without re-deriving from first principles. When someone says "that's not how we do things here," they're invoking a pre-loaded abstraction. Truth that was engineered into the organization.

Strong culture compounds the same way good abstractions do. When the axioms are clear, decisions are faster, alignment is cheaper, and people at the edges of the organization can act autonomously because they share the same assumptions. Weak culture forces every decision upward because nobody trusts the default context. Contradictory culture is worse: different parts of the organization operate on conflicting assumptions, producing not slowness but active interference. Teams optimize against each other. Decisions get relitigated. Energy goes to resolving conflicts that shouldn't exist. In software terms, a dependency conflict at the foundation layer. Everything built on top inherits the instability.

The resultant force of aligned assumptions vs misaligned ones.

Culture is one layer of organizational truth. Beneath it lies something deeper: domain knowledge. The accumulated expertise about how a specific industry actually works, where the edge cases hide, which assumptions hold and which crack under pressure. This is arguably the world's highest-priced asset. It's the reason ASML can charge what it charges for lithography machines, the reason competitors have struggled to match TSMC's yield rates, the reason Nvidia's CUDA ecosystem creates lock-in that hardware competition alone has not broken.

This knowledge isn't a static snapshot. It's a derivative. ASML's competitive advantage isn't what they know right now but the rate at which they learn: the speed at which new process knowledge gets structured, validated, and embedded into the next generation of machines. A competitor can't catch up by hiring ASML's engineers, because the advantage isn't stored in any individual. It's stored in the system: the feedback loops, the institutional processes, the structured and continuously updated understanding of how extreme ultraviolet lithography actually behaves at the edge of what physics allows. That system is what converts expertise into compounding returns. Not a moat in the traditional sense. A function that accelerates the company while competitors operate on last year's assumptions.

These companies have better structured knowledge about how to build, operate, and improve their products. That knowledge is embedded in processes, tooling, institutional memory. Almost none of it is written down in a way that an agent could use.

The moat is the function, not the output.

Agents Make the Implicit Explicit

Hand a task to a human employee and they automatically add implicit context: the client's history, the industry norms, the regulatory quirks, the unwritten rules. They don't consult a knowledge base for every decision. They just know.

Hand the same task to an agent and every assumption needs to be encoded, every domain truth structured, verified, and made accessible. The agent has no implicit context. Only what you give it.

This is the hardest and most valuable thing about building with AI.

Hardest because most organizations don't know what they know. The knowledge lives in people's heads, in email threads, in the accumulated intuition of senior employees who've been there for decades. Most companies that attempt extraction underestimate the effort by an order of magnitude. And the process surfaces contradictions: assumptions that different departments treat as true but that directly conflict, policies updated in one system but not another, knowledge that was correct three years ago and has silently drifted. In a human organization, these get papered over through informal negotiation. In an agent-powered organization, they produce failures.
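The contradictions described above can be surfaced mechanically once statements are written down in a comparable form. The following is a minimal sketch, not a prescribed method: it assumes each piece of knowledge has already been reduced to a key, a claimed value, and a source. The `Fact` type, the `find_contradictions` helper, and all sample values are hypothetical and exist only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    key: str     # what the statement is about (hypothetical naming)
    value: str   # what the source claims
    source: str  # who holds the claim: a department, system, or document


def find_contradictions(facts):
    """Return {key: {value: [sources]}} for every key with conflicting values."""
    claims = defaultdict(lambda: defaultdict(list))
    for fact in facts:
        claims[fact.key][fact.value].append(fact.source)
    # A key is contradictory when its sources disagree on the value.
    return {
        key: {value: sorted(sources) for value, sources in by_value.items()}
        for key, by_value in claims.items()
        if len(by_value) > 1
    }


# Made-up sample data: two systems disagree on one policy, agree on another.
facts = [
    Fact("refund_window_days", "30", "support_handbook"),
    Fact("refund_window_days", "14", "billing_system"),
    Fact("invoice_currency", "EUR", "finance"),
    Fact("invoice_currency", "EUR", "sales"),
]
conflicts = find_contradictions(facts)
```

Here `conflicts` contains only `refund_window_days`, with each competing value mapped to the sources that assert it. Deciding which value is right remains a job for a domain expert; the sketch only makes the disagreement visible before an agent acts on it.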

But making knowledge explicit is the most valuable thing you can do, because once externalized, you unlock operations that were structurally impossible before. Scale: one knowledge base serves a thousand agents instead of one employee. Version: update the source of truth once and every agent downstream works from the corrected version on its next read. Compose: combine knowledge from different domains, jurisdictions, or clients into new configurations. Audit: trace any decision back to the specific piece of context that produced it.
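Two of these operations, versioning and audit, can be sketched in a few lines. This is an illustration under stated assumptions rather than a reference design: `KnowledgeBase`, `Entry`, `Decision`, and `decide_refund` are hypothetical names, the store is in memory, and the refund policy values are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entry:
    key: str
    value: str
    version: int  # increases by one on every update to the same key
    source: str   # hypothetical provenance label


class KnowledgeBase:
    def __init__(self):
        self._history = {}  # key -> list of Entry, oldest first

    def put(self, key, value, source):
        """Append a new version instead of overwriting the old one."""
        versions = self._history.setdefault(key, [])
        entry = Entry(key, value, len(versions) + 1, source)
        versions.append(entry)
        return entry

    def get(self, key):
        """Return the latest version of a key."""
        return self._history[key][-1]


@dataclass(frozen=True)
class Decision:
    output: str
    used: tuple  # the exact entries the decision relied on


def decide_refund(kb, days_since_purchase):
    # The "agent" reads its premise from the shared store and records it.
    window = kb.get("refund_window_days")
    approved = days_since_purchase <= int(window.value)
    return Decision("approve" if approved else "reject", (window,))


kb = KnowledgeBase()
kb.put("refund_window_days", "30", "support_handbook")
before = decide_refund(kb, 20)
kb.put("refund_window_days", "14", "policy_update")
after = decide_refund(kb, 20)
```

The same request is approved before the update and rejected after it, and each `Decision` carries the version and source of the premise it used. Any number of callers sharing one `kb` pick up the change on their next read, which is the scaling property in miniature.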

Before agents, knowledge was embodied in people and transmitted through apprenticeship, meetings, and osmosis. With agents, knowledge must be externalized, structured, and maintained as a living system. The companies that do this well compound faster than any technology moat: their agents get smarter with every engagement while their competitors' agents stay general.

A well-maintained knowledge base scales, a weak one stifles, a contracting one kills.

What Happens When Context Is Wrong

The asymmetry is important. Bad code produces bugs that are usually visible: the feature doesn't work, the page doesn't load, the test fails. Bad context produces errors that are invisible: the output looks correct, passes review, and implements the wrong assumption. The agent didn't hallucinate; it reasoned correctly from incorrect premises.

This is why the hallucination conversation is mostly misdirected. The focus is on model-level failures: the model made something up. The more dangerous failure mode is context-level: the model executed faithfully on knowledge that was outdated, incomplete, or wrong. No amount of model improvement fixes that. And the model-level failures that remain will be fixed by models optimized for different rewards than today's leaders: verification over generation, asking over answering, refusal over completion.

Quality assurance has to shift accordingly. In traditional software, the critical review is code review: does this code do what it should? In knowledge-powered organizations, the critical review is knowledge review: is the context that agents operate on accurate, current, and correctly scoped?
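Two of the three review questions, "current" and "correctly scoped", lend themselves to an automated pre-check that queues entries for a human reviewer. Accuracy still needs a domain expert. The sketch below assumes each entry records when it was last verified, how long that verification is trusted, and where it applies; `ContextEntry`, `review_flags`, and the sample values are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class ContextEntry:
    key: str
    value: str
    verified_on: date   # last time a domain expert confirmed the entry
    max_age: timedelta  # how long a confirmation is trusted
    scope: frozenset    # where the entry applies, e.g. jurisdictions


def review_flags(entry, today, task_scope):
    """Return the reasons an entry should go to knowledge review, if any."""
    flags = []
    if today - entry.verified_on > entry.max_age:
        flags.append("stale")
    if task_scope not in entry.scope:
        flags.append("out_of_scope")
    return flags


# Made-up entry: verified once, trusted for a year, valid in one jurisdiction.
entry = ContextEntry(
    key="filing_deadline_days",
    value="45",
    verified_on=date(2022, 1, 10),
    max_age=timedelta(days=365),
    scope=frozenset({"NL"}),
)
fresh = review_flags(entry, date(2022, 6, 1), "NL")
drifted = review_flags(entry, date(2025, 1, 10), "DE")
```

`fresh` is empty, while `drifted` carries both flags: the entry was last confirmed three years before the task and is being applied outside the jurisdiction it was written for. An agent using it would still produce plausible output, which is why the check belongs in front of the agent rather than behind it.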

This requires domain expertise, not programming expertise: people who understand the substance of what agents are doing, not just the mechanics. The role isn't new. Domain experts have always reviewed work products. What's new is that the work product is the knowledge itself. They're reviewing premises, not conclusions.

Context Is the Moat

Every cycle in tech produces a moat thesis. In the PC era, it was distribution. In the internet era, it was network effects. In the cloud era, it was data. In the AI era, the moat is structured, verified, domain-specific context.

Models are commoditizing. The gap between the best and fifth-best foundation model shrinks every quarter, and efficiency techniques from quantization to mixture-of-experts architectures are bringing near-frontier inference to consumer hardware. The model layer is necessary but not defensible. A raw material, not a finished good.

Code is commoditizing faster. When agents write code at the speed of inference, code is no longer scarce. The scarcity is in the primitives and the assembly process. And when both are right, the vision extends beyond software into services: outcomes become a matter of inference, not labor.

But even the best primitives and the best assembly process produce unreliable output if the context is wrong. A factory with perfect machinery and contaminated raw materials produces defective products. The raw material of the knowledge factory is truth.

ASML doesn't license its process knowledge. TSMC doesn't open-source its yield optimization techniques. Nvidia doesn't publish the full depth of CUDA's compiler optimizations. These companies understood, long before the AI era, that structured domain knowledge is the most durable competitive advantage in technology. Everything else, the hardware, the software, the talent, can eventually be replicated. The accumulated, structured, verified understanding of how things actually work in a specific domain is nearly impossible to reproduce from outside, and is worth trillions.

The same structural logic applies to every knowledge company. The ones that win make their domain knowledge explicit, structured, and continuously verified. The only layer that doesn't commoditize.

Umberto Boccioni, States of Mind III: Those Who Stay (1911).

The models are the storm, the primitives are the architecture, the constraints are the constitution. This essay is about what goes into that constitution: not rules about what agents can or can't do, but the structured knowledge that determines whether what they do is grounded in reality. A constitution encodes a worldview: what's true, what matters, what the boundaries of acceptable action look like. The companies writing the best constitutions won't have the fastest models. They'll have the most trustworthy agents. And in a world where every company has access to the same storm, trust is what clients pay for.

Engineer the truth, or your factory runs on assumptions that nobody audited and everybody trusts. Engineering truth is the pattern that enables humanity to scale across time, knowledge, and actions. We're about to need much better answers.